I got myself a NAS with two 1 GbE ports and link aggregation support, so naturally I had to try whether it would work with my Omnia. I’m writing this because I haven’t found much information on this topic apart from a failed attempt described here.

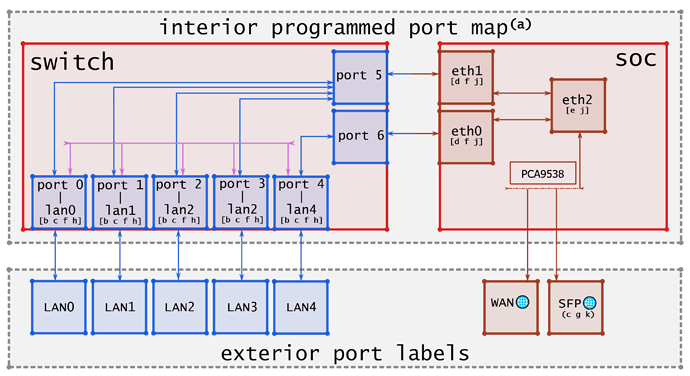

Let’s start with my understanding of the hardware setup and the interconnection of components on the Omnia. The SoC has three interfaces (eth0, eth1, and eth2). The switch chip has five front-panel ports and two CPU ports. SoC interface eth2 is connected to WAN, while eth0 and eth1 are connected to the switch chip's CPU ports, specifically ports 5 and 6. All ports are 1 GbE. I have found a nice schematic here on this forum:

I believe there is no support for LAG in the switch HW, but TOS supports the Linux Ethernet bonding driver. This means that all the LAG-related traffic will pass through the SoC and be processed by the kernel.

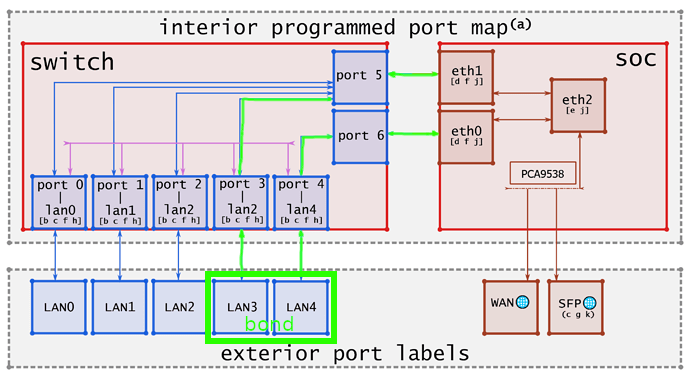

In order to utilize both SoC ↔ switch links, one of the slave interfaces must use port 4 (LAN4). This is due to the internal switch port interconnection (I don’t know whether it’s programmable or not):

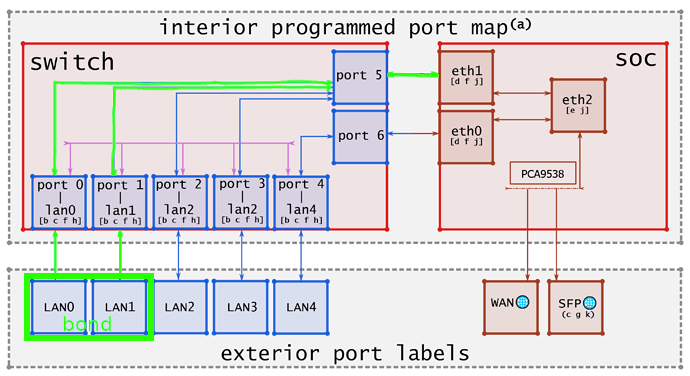

Not using port 4 results in the entire LAG traffic going through the port 5 ↔ eth1 link only, effectively capping throughput to 1 Gbit:

Both devices must be properly configured. In Synology DSM, it takes only a few clicks to create the bonded interface. Four different bonding modes are supported, but I’ve tried only 802.3ad.

On Turris, the bonding driver must first be installed and loaded:

opkg install kmod-bonding

modprobe bonding

Loading the module will create interface bond0. The bonding driver may be configured via the sysfs interface. This allows dynamic configuration of all bonds in the system without unloading the module:

echo 802.3ad >/sys/class/net/bond0/bonding/mode

echo 100 >/sys/class/net/bond0/bonding/miimon
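To double-check that the values were accepted, the same sysfs attributes can be read back. Below is a minimal sketch of that; the function name `show_bond_settings` and the `BOND_SYSFS_ROOT` override are my own inventions for illustration (the override only exists so the function can be pointed at a scratch directory), and the attribute names are the ones the bonding driver exposes under `/sys/class/net/<bond>/bonding/`.

```shell
#!/bin/sh
# Print selected bonding options of a bond by reading its sysfs attributes.
# BOND_SYSFS_ROOT is a hypothetical override for testing; by default the
# real sysfs location /sys/class/net is used.
show_bond_settings() {
    bond="${1:-bond0}"
    dir="${BOND_SYSFS_ROOT:-/sys/class/net}/$bond/bonding"
    for attr in mode miimon xmit_hash_policy; do
        if [ -r "$dir/$attr" ]; then
            printf '%s: %s\n' "$attr" "$(cat "$dir/$attr")"
        else
            # Attribute missing, e.g. the bonding module is not loaded
            printf '%s: (not available)\n' "$attr"
        fi
    done
}

show_bond_settings bond0
```

On a bond configured as above, the `mode` line should read `802.3ad 4` (the driver reports both the mode name and its numeric value).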

I then made a bond of the two interfaces:

ip link set bond0 up

ip link set lan3 master bond0

ip link set lan4 master bond0

and added the bond to my LAN bridge:

ip link set bond0 master br-lan
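Whether both slaves really joined the bond and have link can be checked in `/proc/net/bonding/bond0`, which the bonding driver provides. The following is just a sketch that pulls the per-slave link state out of that file; `bond_slave_status` is a helper name I made up, and it assumes the usual layout where each `Slave Interface:` line is followed by that slave's `MII Status:` line.

```shell
#!/bin/sh
# List each slave of a bond together with its MII link status, parsed from
# the bonding driver's status file (default: /proc/net/bonding/bond0).
bond_slave_status() {
    file="${1:-/proc/net/bonding/bond0}"
    [ -r "$file" ] || { echo "cannot read $file" >&2; return 1; }
    awk -F': ' '
        # Remember the slave name; the bond-wide "MII Status" line that
        # appears before any slave section is skipped because slave is empty.
        /^Slave Interface:/ { slave = $2 }
        /^MII Status:/ && slave != "" { print slave, $2; slave = "" }
    ' "$file"
}

bond_slave_status /proc/net/bonding/bond0 || echo "bond0 not present on this machine"
```

With the setup described here, I would expect one line each for lan3 and lan4.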

At this point, the bond was up and running and the NAS was reachable, so it was time to benchmark. To check the throughput, I had an iPerf3 server running on the NAS and a client on the Turris. The result was, however, only 1 Gbit. All the traffic went through a single slave interface. Why? Because the slave selection for outgoing traffic is done according to a transmit hash policy, and the default algorithm places all traffic to a particular network peer on the same slave. It can be changed to a policy that allows traffic to a particular network peer to span multiple slaves, although multiple connections must be used, because each individual connection still ends up on one slave:

echo layer3+4 >/sys/class/net/bond0/bonding/xmit_hash_policy

and I was finally able to achieve almost 2 Gbit throughput, hooray:

# iperf3 --client 192.168.1.100 --parallel 2

...

[ ID] Interval Transfer Bitrate Retr

[SUM] 0.00-10.00 sec 2.17 GBytes 1.86 Gbits/sec 0 sender

[SUM] 0.00-10.01 sec 2.17 GBytes 1.86 Gbits/sec receiver
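To illustrate why the port numbers make the difference, here is a rough sketch of a layer3+4 style hash. It uses the simplified formula given in older versions of the kernel bonding documentation, `((source port XOR dest port) XOR ((source IP XOR dest IP) AND 0xffff)) modulo slave count`; current kernels compute the hash differently, so treat this as an illustration of the idea rather than what the driver actually does. The function names, the router address 192.168.1.1, and the source ports are assumptions made up for the example (5201 is the iPerf3 default server port).

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1          # split the address into four positional parameters
    IFS=$old_ifs
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Simplified layer3+4 slave selection (formula from older bonding docs).
# Arguments: src_ip dst_ip src_port dst_port slave_count
l34_slave() {
    sip=$(ip_to_int "$1")
    dip=$(ip_to_int "$2")
    echo $(( (($3 ^ $4) ^ (($sip ^ $dip) & 0xffff)) % $5 ))
}

# Two hypothetical parallel streams to the same peer, differing only in
# their source port.
for sport in 50000 50001; do
    echo "source port $sport -> slave $(l34_slave 192.168.1.1 192.168.1.100 "$sport" 5201 2)"
done
```

With these made-up ports the two streams land on different slaves. Real source ports are picked by the OS, so two parallel streams may just as well hash to the same slave, in which case the throughput stays at 1 Gbit.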

Quite useless, but it works! Well, only partially. I can reach the NAS from Turris and from WLAN, but it’s unreachable from devices connected to the other LAN switch ports. These devices are not receiving ARP responses from the NAS, and I have no idea why.

Feel free to share any thoughts on this topic!